[The following letter to the editor was published in the American Journal of Cardiology in response to an excellent article by George Diamond and Sanjay Kaul, who highlighted the limitations of quantitative methods for achieving relevant “risk-stratification” at the individual level. Comments made by these authors prompted me to reflect on the tension between the appeal of quantitative methods and the value of unquantifiable clinical skills. I hope you will find these remarks stimulating.]

In the March 15, 2012, issue of The American Journal of Cardiology, Diamond and Kaul1 provided an insightful analysis of the complex relation between risk stratification schemes and therapeutic decision making. The investigators clearly identified some of the reasons why predicting response to treatment at the individual level is difficult. However, they concluded their report with a caution against “wholesale abandonment of evidence-based guidelines in favor of idiosyncratic clinical judgment,” which, in their opinion, runs the risk of “intellectual gerrymandering” and “wasteful utilization of high-cost technology.”

Proponents of quantitative methods of clinical assessment frequently portray critics as Luddites ready to “jettison” objective evaluation in favor of personal opinion rooted solely in clinical experience.2 This is an unfair characterization. No one is seriously suggesting that knowledge obtained from the analysis of large cohort studies is of no value or should not guide practice. The argument is rather directed at the emphasis placed on “best practice” guidelines that give prominence to outcomes research3 and increasingly serve as the basis to reward or penalize clinical performance.4,5

For one thing, such guidelines presume bland skills on the part of the physician, because risk and outcome quantification in large clinical studies must necessarily amalgamate decisions made by numerous individual doctors. The treatment effect observed is therefore the rendition of the work of a synthetic, “average” clinician.

Integral to the skill of a physician is the ability to grasp a patient’s psychological and socioeconomic makeup and understand how these poorly quantifiable characteristics shape the patient’s preferences and desires. These features are essentially inaccessible to large cohort studies, yet they undoubtedly influence treatment response. Furthermore, it seems obvious that in some cases, deviating from guidelines on the basis of local knowledge can avoid precisely the “wasteful utilization” that would arise from the blind application of treatment algorithms.

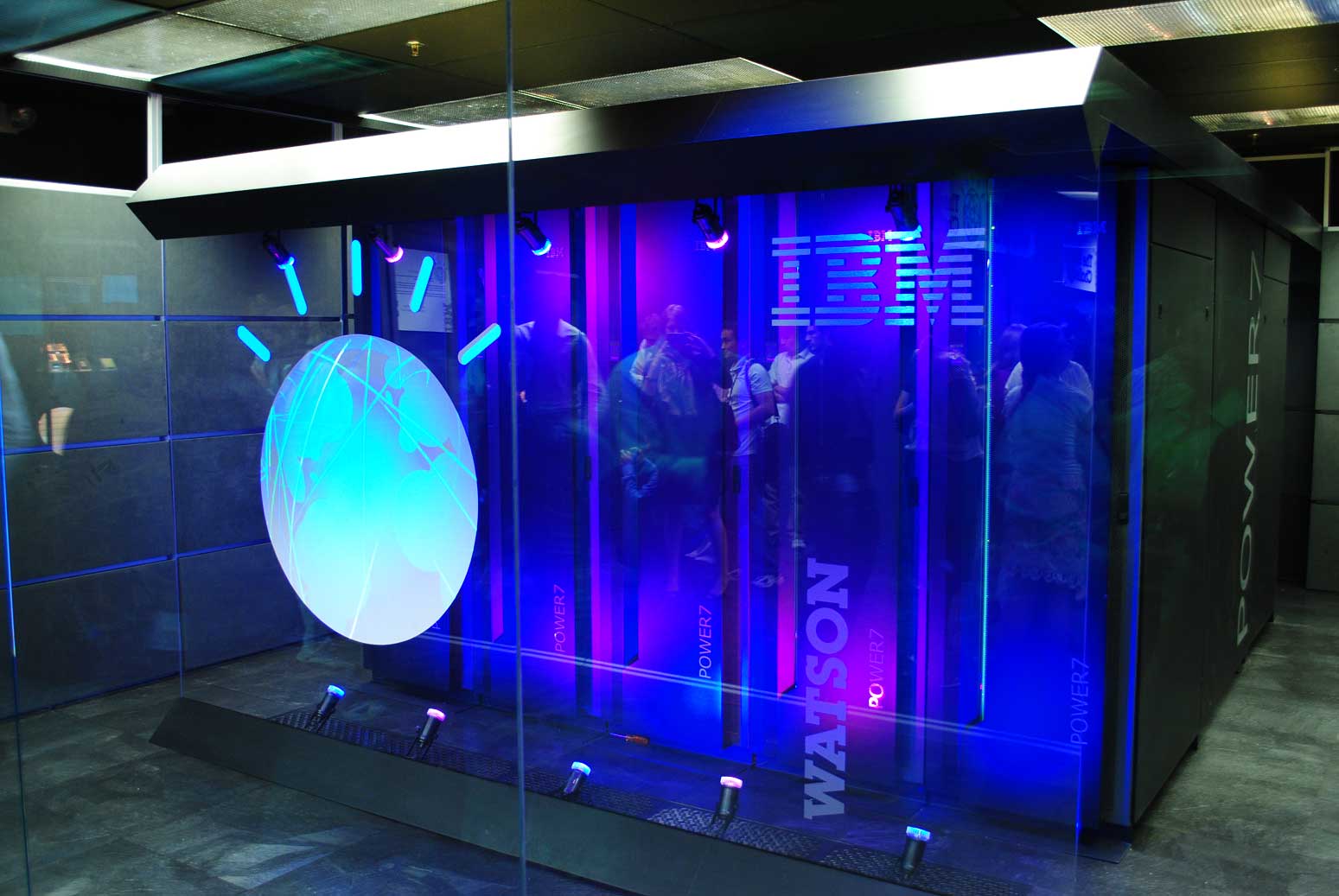

To turn the tables on the expounders of guideline medicine, one could point out that no study has ever prospectively compared outcomes obtained by a given clinician with those achieved by following guideline-derived decision rules. Such a “Kasparov versus Deep Blue” type of experiment is obviously impossible to carry out, but conceptualizing the match helps us recognize what is at stake in the debate.

Quantitative analyses of large clinical studies yield a highly instructive body of knowledge from which doctors should continually draw. However, as Diamond and Kaul1 noted, these complex methods can mislead even the experts. Clinical epidemiologists, guideline authors, and policy makers should not discount the fact that knowledge acquired at the bedside by a skillful clinician can mitigate the difficulties of statistical prediction.

References

1. Diamond GA, Kaul S. By desperate appliance relieved? On the clinical relevance of risk stratification to therapeutic decision-making. Am J Cardiol 2012;109:919–923.

2. Kent DM, Rothwell PM, Ioannidis JP, Altman DG, Hayward RA. Assessing and reporting heterogeneity in treatment effects in clinical trials: a proposal. Trials 2010;11:85.

3. Tanenbaum S. What physicians know. N Engl J Med 1993;329:1268–1271.

4. Berwick DM, DeParle N, Eddy DM, Ellwood PM, Enthoven AC, Halvorson GC, Kizer KW, McGlynn EA, Reinhardt UE, Reischauer RD, Roper WL, Rowe JW, Schaeffer LD, Wennberg JE, Wilensky GR. Paying for performance: Medicare should lead. Health Aff (Millwood) 2003;6:8–10.

5. Greenberg JO, Dudley JC, Ferris TG. Engaging specialists in performance-incentive programs. N Engl J Med 2010;362:1558–1560.
